The Safety Case for Data Quality: Why Your Annotation Pipeline Is a Safety-Critical System

There is a standard assumption embedded in most automotive safety cases: the training data is a given.

The sensor hardware gets ASIL decomposition. The perception algorithm gets verification and validation. The system–level behaviour gets SOTIF analysis. But the data used to train the perception model – the annotated ground truth that teaches the system what a pedestrian looks like at night, in rain, partially occluded by a parked van, not a variable. It's assumed to be adequate. It's rarely documented as safety–critical.

That assumption is wrong. And as ISO 26262, SOTIF, and Euro NCAP 2026 tighten their requirements, it is becoming an expensive assumption to hold.

Your annotation pipeline is a safety–critical system. Not metaphorically. Structurally, in the same sense that your braking actuator and your sensor fusion module are safety–critical. Here is what that means for how you design, audit, and govern it.

The Hidden Variable in Your Safety Case

Consider how a perception model failure actually happens in a deployed ADAS system.

A camera misclassifies a motorcyclist at a junction. The fusion model, receiving inconsistent signals from LiDAR and camera, falls back to a lower–confidence detection. The planning system, uncertain, brakes late. The outcome depends on the margin you had.

Now trace that failure back to its origin. The most common root causes are not hardware defects or algorithm bugs. They are:

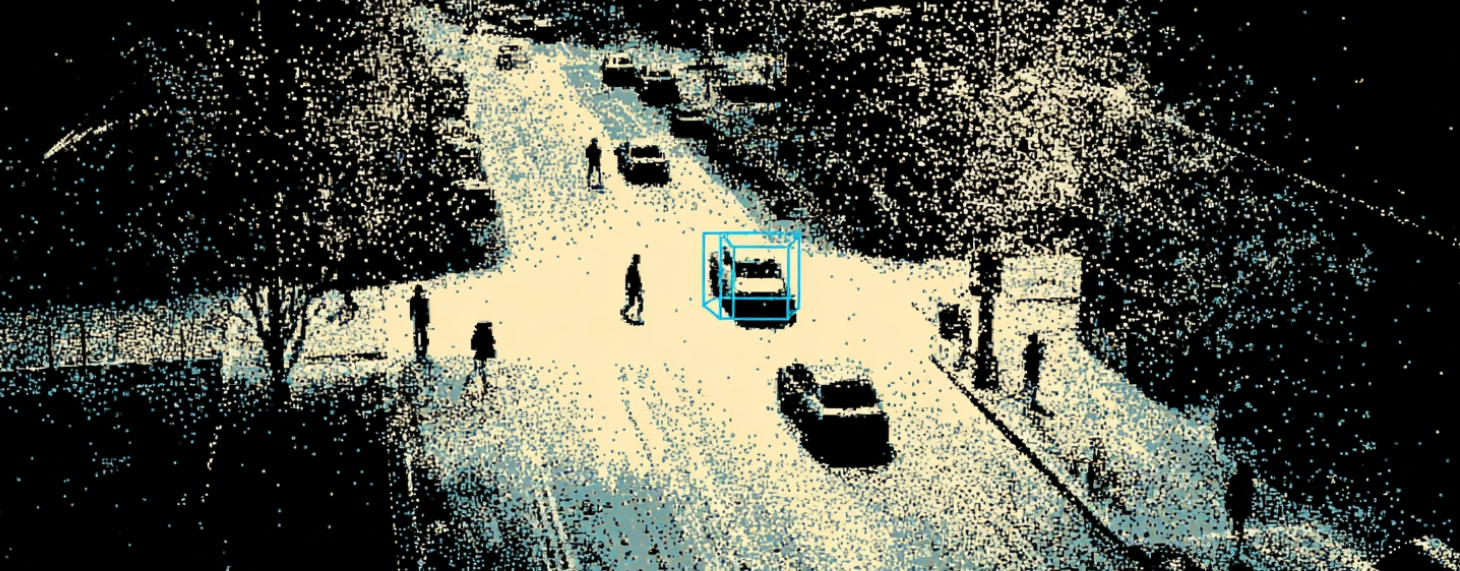

- Annotation errors – bounding boxes that do not tightly fit the object, wrong class labels for edge–case objects, missing annotations for partially visible targets

- Coverage gaps – training datasets that do not include enough examples of rare but critical scenarios (night, adverse weather, unusual pedestrian behaviour, junction geometries)

- Calibration drift – sensor misalignment that was present at annotation time, causing the model to learn from geometrically incorrect ground truth

- Cross–modal inconsistency – a pedestrian annotated in the camera frame that does not correspond to the correct point cluster in LiDAR, creating phantom detections or missed targets in the fusion model

None of these are hardware failures. None of them are algorithm failures. They are data failures. And they propagate silently through your pipeline until they surface – at validation, during testing, or in deployment.

A safety case that doesn't document and control these failure modes has a gap in it.

What the Standards Actually Say

ISO 26262

ISO 26262 defines safety–critical components as those whose failure could contribute to hazardous events. It requires systematic identification of failure modes, documented controls, and traceability from component to system–level safety goal.

Annotation errors are a failure mode of the data pipeline. They contribute to perception failures. Perception failures are a direct pathway to hazardous events. Under a strict reading of ISO 26262, the annotation pipeline sits inside the functional safety boundary – it just has not been treated that way in most organisations.

The standard doesn't yet prescribe annotation quality explicitly. But the logic of the framework demands it. When safety engineers ask what are all the ways this system can fail, and the answer doesn't include the training data was wrong, that analysis is incomplete.

SOTIF (ISO 21448)

SOTIF is more direct. It was written specifically to address failures that occur not from hardware malfunctions, but from functional insufficiencies – cases where everything works as designed, and the system still causes an accident.

Section 5 of SOTIF requires teams to identify and reduce "unknown unsafe" scenarios: situations your system hasn't been tested against and may not handle correctly. The standard explicitly includes the operational design domain (ODD) definition as a source of insufficiency – if your ODD does not match your training data coverage, you have a SOTIF gap.

Annotation quality and dataset coverage are not peripheral to SOTIF compliance. They are central to it. An annotation error that causes your model to fail in a scenario type it was supposed to handle is a functional insufficiency by definition.

Euro NCAP 2026

Euro NCAP's 2026 framework expanded from approximately 100 to 1,200+ test scenarios, adding night conditions, adverse weather, complex junction geometries, motorcycle interactions, and high–speed scenarios. Each of those new scenarios requires annotated ground truth. Each requires that ground truth to be accurate.

A team pursuing a 5–star rating in 2026 cannot do it with a 2022–era annotation strategy. The scenario coverage requirements alone demand a step–change in how annotation pipelines are designed and governed.

Three Ways Annotation Errors Become Safety Failures

1. Geometric Errors → Wrong Velocity Estimation

A 3D bounding box that is slightly too large, or not tightly fitted to the vehicle it contains, will produce an incorrect centroid. The tracking algorithm, using centroid movement across frames to estimate velocity, will return a wrong number. A vehicle that is decelerating may appear to be stationary. A vehicle that is accelerating may appear to be moving more slowly than it is.

At highway speeds, a velocity estimation error of even 5 km/h can change a safe gap into a collision scenario. The annotation error was fractions of a point cloud unit. The consequence was a late intervention.

2. Coverage Gaps → Unknown Unsafe Scenarios

If your training dataset contains 50,000 examples of pedestrians in daylight and 200 examples of pedestrians in heavy rain at night, your model has not learned the night–rain case. It has seen it, briefly, but not learned it.

When that scenario appears in the real world – which it will – the model operates in territory it does not understand. It may still produce a detection. The confidence score may even look reasonable. But the model's internal representation of that scenario is fragile, built on too few examples to generalise correctly.

This is exactly the unknown unsafe region SOTIF is designed to address. And the cause is not algorithmic – it's a dataset coverage decision.

3. Calibration Drift → Cross–Modal Corruption

Calibration defines the geometric relationship between your sensors. When a LiDAR unit and a camera are correctly calibrated, a point in the point cloud maps precisely to the correct pixel in the camera image. Annotations made with that calibration are geometrically accurate.

When calibration drifts – due to vehicle vibration, temperature cycling, or physical impact - the mapping breaks. Annotations made against a drifted calibration are systematically wrong. The model trains on geometrically incorrect data. The error is not random noise; it is a consistent offset that teaches the model to perceive the world slightly incorrectly.

Deepen's targetless calibration reduces calibration time from hours to seconds and detects drift proactively. But the principle applies regardless of tooling: calibration quality is a precondition for annotation quality, and annotation quality is a precondition for model safety.

What a Safety–Grade Annotation Pipeline Actually Looks Like

A safety–grade pipeline has five properties that distinguish it from a standard labeling operation:

1. Calibration as a gate, not a step

Annotation doesn't begin until calibration has been verified for the current session. Calibration quality thresholds are defined and documented. Any session that fails calibration check is flagged before annotation begins, not discovered during QA.

2. Defined annotation quality thresholds by object class and scenario type

Different objects in different scenarios have different safety implications. A tightly fitted bounding box on a pedestrian at a junction matters more than one on a distant background vehicle. A safety–grade pipeline applies differentiated quality thresholds – and tracks performance against them per class and per scenario.

3. Cross–modal validation as a mandatory step

Every annotation is validated for consistency across sensor modalities before it enters the training set. a pedestrian annotated in LiDAR must correspond to the correct detection in the camera frame. Inconsistencies are flagged and resolved, not averaged out.

4. Coverage tracking against the ODD

The pipeline tracks dataset coverage against a defined operational design domain. Scenario categories are tagged at annotation time (weather, time of day, junction type, object class distribution). Coverage gaps are visible and addressable before they become safety gaps.

5. Traceability from label to model output

When a model fails in validation, you can trace back to the training data that contributed to that failure. This requires labeling provenance – who annotated it, when, against which calibration state, reviewed by whom. Without this, root cause analysis of model failures is guesswork.

The Organisational Gap

Most annotation pipelines are owned by data engineering or ML operations teams. Most safety cases are owned by functional safety engineers. In the majority of organisations, these two groups have minimal overlap.

The safety engineer doesn't review annotation quality thresholds. The data ops team doesn't read the SOTIF analysis. The pipeline that connects training data to deployed model behaviour exists in the gap between them.

Closing that gap is not primarily a tooling problem. It is an organisational decision: to extend the scope of the safety case to include the data pipeline, assign accountability, and build the review processes to match.

But tooling helps. A platform that makes annotation quality measurable, calibration traceable, and coverage visible makes it possible for safety engineers to actually govern the data pipeline – not just trust it.

Conclusion

Your annotation pipeline is not support infrastructure. It is the system that determines what your perception model knows about the world. When it is wrong, systematically, quietly, invisibly, your model is wrong. And a wrong perception model in a safety–critical vehicle is a safety failure waiting to be triggered.

ISO 26262, SOTIF, and Euro NCAP 2026 are pushing in the same direction. The standards have moved further than most organisations' practice. The practical starting point is treating annotation quality not as an engineering hygiene metric, but as a line item in the safety case.

Deepen's unified platform – calibrate, annotate, validate as a closed loop – is designed for exactly this kind of pipeline. Book a demo to see it in practice.